- cross-posted to:

- android@lemdro.id

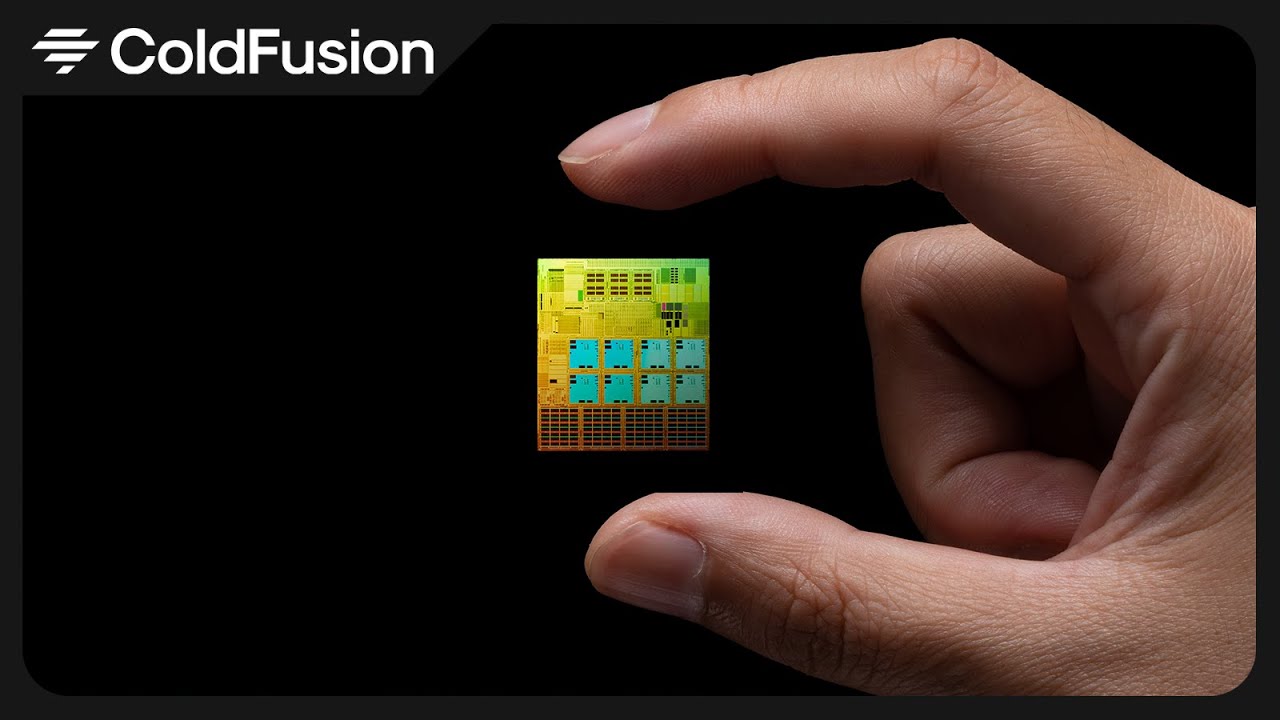

Qualcomm bought a company named Nuvia, made up of ex-Apple engineers who helped design the M series Apple silicon chips, to produce Oryon, which exceeds Apple’s M2 Max in single-threaded benchmarks.

The impression I get is that these are for PCs and laptops.

I’ve been following the development of Asahi Linux (Linux on the M series MacBooks). With this new development, there are some exciting times to come.

I’m just eager to know how much laptops will cost with the new Qualcomm chip. I don’t want to pop champagne too early only to realize that new ARM laptops cost $2000.

I’d expect them to start around 1k. Not many people are going to be buying these devices, so there are no economies of scale.

Also, I love how Qualcomm announced this CPU and a day later Apple released the M3, which is finally a real upgrade from the M1.

1k like the MacBook Air, or 1k with actually good memory and storage specs?

Or, gasp, something mildly modular you can upgrade if you need to.

Lots of tech companies might be interested. For example, at my work we are now stuck halfway between x64 and ARM, both on the server side and on the developers’ side (Linux users are on x64 and Mac users are on ARM). While multiarch OCI/docker containers minimize the pains caused by this, it would still be easier to go back to a single architecture.

The Qualcomm chip won’t be binary compatible with Apple chips, so nothing will change for you.

If you build a docker image on an ARM Mac with default settings, it will happily run on Linux on ARM; the same goes for a Go app compiled with GOOS="linux", for example. Of course you can always fix the issues that pop up by also specifying the architecture, but people often forget, and in the case of docker it has significant performance penalties.
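To make the "also specifying the architecture" part concrete, here is a minimal Python sketch that turns whatever the build machine reports into an explicit os/arch pair, the kind of value you would pass to `docker build --platform` or split into GOOS/GOARCH. The function name `oci_platform` and the lookup table are made up for illustration and only cover the common 64-bit cases.

```python
import platform
from typing import Optional

# Kernel-reported machine names mapped to the architecture names used by
# Go (GOARCH) and OCI image platforms. Illustrative, not exhaustive.
_MACHINE_TO_ARCH = {
    "x86_64": "amd64",
    "amd64": "amd64",
    "aarch64": "arm64",  # what Linux on ARM typically reports
    "arm64": "arm64",    # what macOS on Apple silicon reports
    "riscv64": "riscv64",
}


def oci_platform(os_name: str = "linux", machine: Optional[str] = None) -> str:
    """Return an explicit os/arch string such as 'linux/arm64'.

    If no machine name is given, the host's own is used, which is exactly
    the implicit default that bites people building on an ARM Mac for
    x64 servers.
    """
    machine = (machine or platform.machine()).lower()
    if machine not in _MACHINE_TO_ARCH:
        raise ValueError(f"unrecognised machine type: {machine!r}")
    return f"{os_name}/{_MACHINE_TO_ARCH[machine]}"


if __name__ == "__main__":
    # Shows what a build with default settings would target on this host.
    print("host default:", oci_platform())
    # Shows the value to pin when the servers are x64.
    print("x64 servers: ", oci_platform(machine="x86_64"))
```

Pinning the target explicitly in the build script, rather than relying on the host default, is what removes the "people often forget" failure mode.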

I’m sure Qualcomm knew what they were doing

New tech always comes at a cost, hopefully with the many manufacturers partnering with Qualcomm in this project we’ll have competitive pricing better than the current offering that Apple silicon provides.

Used to be, each year-ish computers got faster AND cheaper. So, it doesn’t “always” have to be that way.

That’s not happening anymore due to real world constraints, though. Dennard scaling combined with Moore’s Law allowed us to get more performance per watt until around 2006-2010, when Dennard scaling stopped applying - transistors had gotten small enough that thermal issues and other current leakage related challenges meant that chip manufacturers were no longer able to increase clock frequencies each generation.
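To put rough numbers on the Dennard scaling point, here is a minimal Python sketch of the idealised first-order model, where switching power is P = C * V^2 * f and leakage is ignored entirely. The function names and the shrink factor `k` are assumptions for illustration, not measurements of any real process node.

```python
def dynamic_power(capacitance, voltage, frequency):
    """First-order CMOS switching power: P = C * V^2 * f."""
    return capacitance * voltage ** 2 * frequency


def relative_power_density(k, voltage_scales=True):
    """Power density after shrinking by factor k, relative to a baseline of 1.0.

    Idealised Dennard scaling: dimensions and capacitance shrink by 1/k,
    frequency rises by k, and voltage drops by 1/k. Leakage is ignored.
    """
    capacitance = 1.0 / k
    frequency = float(k)
    # When voltage can no longer drop (the situation the comment describes),
    # it stays at the baseline value of 1.0.
    voltage = 1.0 / k if voltage_scales else 1.0
    area = 1.0 / k ** 2
    return dynamic_power(capacitance, voltage, frequency) / area


if __name__ == "__main__":
    print(relative_power_density(2))                        # classic scaling
    print(relative_power_density(2, voltage_scales=False))  # voltage stuck
```

With `k = 2` this prints 1.0 and then 4.0: under classic scaling the heat per unit area stays flat, but once voltage stops dropping, the same shrink plus the same clock bump quadruples it. That is why clock frequencies stalled.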

Even before 2006 there was still a cost to new development, though; we consumers just got more of an improvement per dollar a year later than we do now.

You’re right, just like the first RISC-V laptop, which cost more than 1k with awful performance. This will probably follow the M series trend at about 1.5k, but ARM has a lot of competitors…

ARM laptops cost $2000

“Mx Max” already costs $3000, right? $2000 is still cheaper compared to the “MAX” version.

deleted by creator

I kind of agree, in that ARM is even more locked down than x86, but if I could get an ARM with UEFI and all computational power is available to the Linux kernel, then I wouldn’t mind trying one out for a while.

But yes, I can’t wait for RISC-V systems to become mainstream for consumers.

Could you explain how it’s more locked down?

Generally speaking, and I’m not talking about your Raspberry Pis (though even there we find some limitations for getting a system up and booting, and it’s not for lack of transistors).

But say you take a consumer-facing ARM device: almost always the bootloader is locked and a part of some read-only ROM, and touching it without permission voids your warranty.

Compare that with an x86 system, whereby the boot loader is installed on an independent partition and has to be “declared” to the firmware, which means you can have several systems on the same machine.

Note how I’m talking about consumer devices and not servers for data centres or embedded systems.
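If you want to see which side of that divide a given Linux machine falls on, a minimal Python sketch is below. It relies on the kernel exposing a `/sys/firmware/efi` directory when it was booted through UEFI; the function name `boot_mode` and its return strings are made up for illustration.

```python
from pathlib import Path


def boot_mode(sysfs_root="/sys"):
    """Report whether the running Linux kernel was booted through UEFI.

    The kernel creates <sysfs>/firmware/efi only on UEFI boots, so its
    absence means some other path (legacy BIOS, a vendor bootloader, etc.).
    """
    efi_dir = Path(sysfs_root) / "firmware" / "efi"
    return "uefi" if efi_dir.is_dir() else "not-uefi"


if __name__ == "__main__":
    print(boot_mode())
```

On a typical x86 laptop this reports `uefi`; on a locked-down consumer ARM device running Linux it usually will not, which is the practical difference being described.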

Interesting, so you can’t just use any bootloader on ARM Linux? Like systemd-boot or grub2?

I think you’ll be waiting a pretty long time for high-end RISC-V CPUs, unfortunately. I don’t particularly trust Qualcomm, but I’m really hoping to see some good ARM laptops for Linux.

That’s fine. We got our powerful computers to work with until then.

See the Milk-V Pioneer if you need a high-end RISC-V PC.

You don’t trust… a company that licenses an ISA?

When your current alternative is a duopoly spearheaded by Intel?

deleted by creator

That’s worse!

edit: Actually it’s also incorrect, since Nvidia is making ARM chips, not x86.

Same. I’d love it if RISC-V came out with a competing chip.

You might wait for a long time if America bans RISC-V development.

And computing might be hard if Godzilla eats all the power stations.

I hope Microsoft just gives up and builds a new “Windows” which is just another Linux distro xD

Ducking windows can’t even clone the Linux kernel right now

IIRC Microsoft’s woes in the ARM space are twofold. First is the crushing legacy compatibility and the inability to muster developers around anything newer than Win32, and second was signing a deal to make Qualcomm the exclusive supplier of ARM processors for Windows for who knows how long.

Deal is going to expire in 2024!

That’d be based, but I don’t think there’s anything in that for them.

They’re a platform company that provides services. They could build proprietary services on top of a Linux distro. Basically the same as they’re doing now with Edge.

Well, I’m sure they’ll find a way.

They’ll probably sooner embrace-extend-extinguish Linux with WSL

Qualcomm you say?

I’ll believe it when it ships

Qualcomm is my main fear also. They will ship it with lots of closed-source firmware digitally signed with their private keys, which users can’t replace, so expect a shitty bootloader. And don’t forget about the always-running hypervisor, TrustZone, and the world’s best-kept-secret modem.

don’t care about absolute performance, more interested in performance/watt

I’m more interested in something that has an actual hardware and software ecosystem. I’m no longer interested in soldering my computer and its peripherals together.

“The real winner is the one who loses the most” indeed.

Would definitely upgrade to that instead of my current Lenovo. I want x86 to die already.

If you want to kill x86, you need to do what Valve and the Wine foundation did with Proton/WINE (mostly Proton at this point though), but for x86 to ARM and maybe other architectures like RISC-V (especially because the Milk-V Pioneer is a thing).

There is too much legacy software that will never be converted that people still use to this day. Once you make it easy to transition, it will slowly but steadily start to happen.

Box86/Box64 are promising, but need help from contributors like you. If you want it to happen, go make it happen, or continue to live in the world you have now.

Well, you do have qemu, which can run x86 programs on other architectures (not just running x86 virtual machines on top of hosts of other architectures).
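For anyone who hasn’t used that mode: QEMU’s user-mode emulators are separate binaries named after the guest architecture (`qemu-x86_64`, `qemu-aarch64`, and so on), and you simply put one in front of the foreign program. Below is a minimal Python sketch of a wrapper; the helper names `qemu_user_command` and `run_foreign` are hypothetical, and it assumes the qemu-user package is installed on the host.

```python
import shutil
import subprocess


def qemu_user_command(guest_arch, program, *args, sysroot=None):
    """Build the argv for running a foreign-architecture Linux binary."""
    command = [f"qemu-{guest_arch}"]
    if sysroot is not None:
        # -L points QEMU at a directory holding the guest's dynamic loader
        # and shared libraries; statically linked programs do not need it.
        command += ["-L", sysroot]
    return command + [program, *args]


def run_foreign(guest_arch, program, *args, sysroot=None):
    """Run the program under QEMU user mode, if the emulator is installed."""
    command = qemu_user_command(guest_arch, program, *args, sysroot=sysroot)
    if shutil.which(command[0]) is None:
        raise FileNotFoundError(f"{command[0]} is not installed")
    return subprocess.run(command, check=False)


if __name__ == "__main__":
    # Only prints the command line; "./hello-x86" is a placeholder path.
    print(qemu_user_command("x86_64", "./hello-x86", "--version"))
```

This only translates the CPU instructions and forwards system calls to the host kernel, so it is much lighter than booting a full x86 virtual machine, though still far from native speed.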

My experience running arm on x86 with qemu was dog slow. This was years ago, though, so hopefully it has gotten better.

Well, legacy software is fine; that stuff mostly runs on old machines/servers/etc. It will be easier to move towards ARM by focusing on the consumer market, where legacy software is less of an issue because programs are frequently updated. Some old server using outdated software that people are afraid to touch? We don’t need to worry about converting that lol.

As long as memory and SSD are upgradable and not soldered on the board, I would buy this laptop.

The limited benchmarks I’ve seen put the new X Elite at slightly less efficient than the M2 Pro (let alone M3 Pro). It only gets marginally higher scores when operating at 3x the wattage.

Also, let’s not imagine even for a second that notoriously terrible ARM are going to make it easy to support this chip, especially not in the long term.

I don’t wanna repeat myself, but: 7840U for the next few years, then I hope RISC-V will be mature enough to kick some ass (and that Framework releases a board for it).

That’s all I dream of.

Check out the Milk-V Pioneer.

The benchmarks for the M3 have the single-core and multicore performance way past similar Intel and AMD chips. Qualcomm’s mobile chips are still nowhere near Apple’s mobile chips. I do not believe for a second that Qualcomm will catch up to the M2 on their first release.

That’s absolutely not true. The M3 Max just about brings Apple performance up to similar levels as Intel and AMD. The Ryzen 9 7945HX3D for example is a laptop processor which trades blows with the M3 on benchmarks - single core the M3’s slightly faster and multi core the Ryzen’s slightly faster - and in performance per watt the Ryzen’s marginally better. So really it’s just catching up with older laptop processors from other manufacturers.

And if you venture outside the laptop space to compare ultimate speed, it’s nowhere near the fastest, particularly in multi-threaded workloads. Its multi-threaded performance is around 13% of the AMD EPYC 9754 Bergamo, for example.

Keep in mind this is with up to an 80 watt TDP vs an effectively 3 year old architecture in a select few tests. The M2 was basically just an overclocked M1, with the Pro/Max models getting 2 extra cores. This is Qualcomm’s best-case scenario.

Apple cooks only with water too.

The Qualcomm X Elite benchmarks faster than the M3 for multicore. Not too surprising, as I think it’s 12 cores vs 10. For single core it’s something like 2700 vs 3200.

Laptops running the X Elite are supposed to be available mid 2024.

M3 is not faster than any AMD or Intel. And PC chips are using very old process nodes. Once Intel gets to 2nm, Apple chips will feel like dumbphone SoCs from the early 2000s running J2ME applets.

deleted by creator

They have moved to 10nm nodes, which have the density of TSMC’s 7nm nodes, afaik. They call this node Intel 7.

Afaik, Intel is also planning to move to Intel 4 and other nodes.

10nm.

Once Intel gets to 2nm

So in like 10 years from now?

Who knows? Intel works in mysterious ways.

If I can get decent performance on a tablet style laptop for cheap I would buy it new

It’s interesting as a comparison to the M3 now and at different power limits. I’m hoping it may benefit the Asahi project also. As a Windows product I don’t think it’ll be good at all unless Microsoft has a Rosetta-like emulation layer that is nearly as good as Apple’s. Without that this product will not do well.

Microsoft has a pretty good translation layer; it’s the hardware x86 acceleration, which Apple’s CPUs have, that most Windows ARM chips lack.

I see a bunch of lawsuits in the future. Because that’s what big companies do.

They don’t compete, they sue or buy out.

I was gonna say the same thing. I sense claims of stolen IP soon

I can’t wait for the hardware Android continuity… that’s the only thing I’m waiting on now to switch to Android besides the raw performance being equal.

Hardware continuity, what do you mean?